FEATURED PUBLICATIONS

SECURE: Semantics-aware embodied conversation under unawareness for lifelong robot learning

Rimvydas Rubavicius, Peter David Fagan, Alex Lascarides, Subramanian Ramamoorthy

School of Informatics, University of Edinburgh, UK

Published in the Proceedings of the Fourth Conference on Lifelong Learning Agents (CoLLAs) 2025

Abstract: This paper addresses a challenging interactive task learning scenario we call rearrangement under unawareness: an agent must manipulate a rigid-body environment without knowing a key concept necessary for solving the task and must learn about it during deployment. For example, the user may ask to “put the two granny smith apples inside the basket”, but the agent cannot correctly identify which objects in the environment are “granny smith” as the agent has not been exposed to such a concept before. We introduce SECURE, an interactive task learning policy designed to tackle such scenarios. The unique feature of SECURE is its ability to enable agents to engage in semantic analysis when processing embodied conversations and making decisions. Through embodied conversation, a SECURE agent adjusts its deficient domain model by engaging in dialogue to identify and learn about previously unforeseen possibilities. The SECURE agent learns from the user’s embodied corrective feedback when mistakes are made and strategically engages in dialogue to uncover useful information about novel concepts relevant to the task. These capabilities enable the SECURE agent to generalize to new tasks with the acquired knowledge. We demonstrate in the simulated Blocksworld and the real-world apple manipulation environments that the SECURE agent, which solves such rearrangements under unawareness, is more data-efficient than agents that do not engage in embodied conversation or semantic analysis.

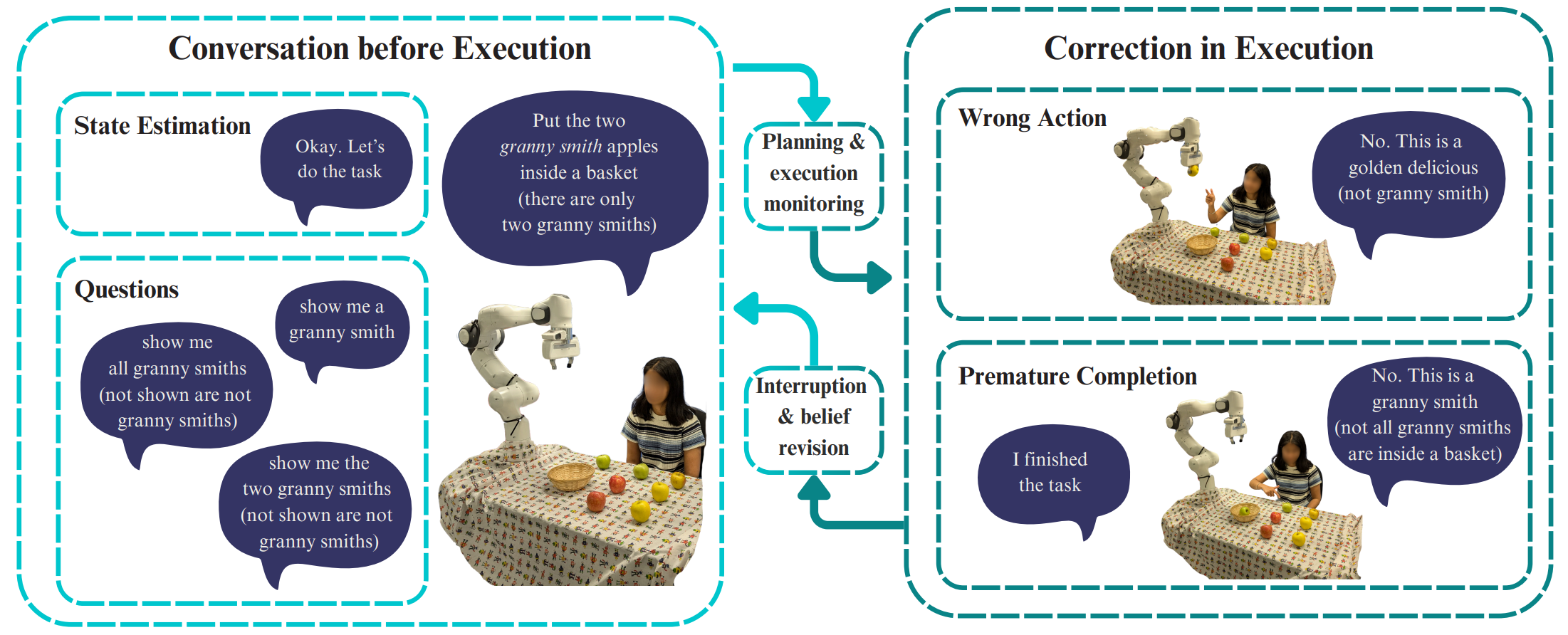

Figure 1: Framework overview. The agent interprets embodied conversation with the user in order to update its beliefs and solve tasks under unawareness: that is, an expression like “granny smith”, which is part of the user’s instruction, is not in the agent’s vocabulary, and the concept that “granny smith” denotes is not part of the agent’s hypothesis space of possible domain models. Our framework enables the agent to exchange embodied messages with the user before attempting to solve the task; the user can also provide feedback on the agent’s actions in the environment during task execution. In conversation before execution, the agent issues questions (e.g., “show me a granny smith”) to reduce its uncertainty about the domain. During task execution, the user responds to a suboptimal action with embodied corrective feedback, e.g., “No. This is a golden delicious”. Such corrections can follow a wrong action (e.g., picking a “golden delicious” instead of a “granny smith”) or premature completion (declaring that the goal state is reached when it is not). This feedback exposes the agent’s false beliefs (being confident but wrong about the state) and triggers execution interruption and belief revision. In both cases, our framework processes embodied conversation in a semantics-aware manner: the user’s messages are augmented with their logical consequences (shown in brackets in this figure), and the augmented messages are used to update the agent’s beliefs, which in turn affect its decision-making.
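
To make the idea of augmenting a message with its logical consequences concrete, the toy sketch below treats the agent's belief as a set of candidate extensions for "granny smith". It is an illustration only and not the SECURE implementation: the function names and object identifiers are hypothetical, and it rests on two assumptions that are ours, namely that "the two granny smith apples" implies exactly two objects satisfy the concept, and that an apple labelled "golden delicious" cannot also be a "granny smith".

```python
from itertools import combinations


def consistent_extensions(objects, count, excluded):
    """Candidate extensions of a concept: every subset of `objects` with
    exactly `count` members that contains no object from `excluded`."""
    return [set(c) for c in combinations(objects, count)
            if not set(c) & excluded]


def marginals(objects, hypotheses):
    """Probability that each object falls under the concept, assuming a
    uniform distribution over the remaining hypotheses."""
    total = len(hypotheses)
    return {o: sum(o in h for h in hypotheses) / total for o in objects}


# Hypothetical scene with four apples.
objects = ["a1", "a2", "a3", "a4"]

# Instruction: "put the two granny smith apples inside the basket".
# Assumed consequence: exactly two objects are granny smith.
before = consistent_extensions(objects, count=2, excluded=set())

# Correction after the agent picks a2: "No. This is a golden delicious".
# Assumed consequence (mutual exclusivity): a2 is not a granny smith.
after = consistent_extensions(objects, count=2, excluded={"a2"})

print(len(before), marginals(objects, before))
print(len(after), marginals(objects, after))
```

Under these assumptions, a single correction halves the hypothesis space (from six candidate extensions to three) and raises the probability of every remaining apple, even though the user said nothing about those apples directly.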